Top Data Center Management Issues

The backbone of every successful network is the data center. Without it, emails would not be delivered, data would not be stored, and hosting multiple sites would not be possible.

Spending on data center systems is anticipated to reach 212 billion US dollars in 2022, an increase of 11.1% from the year before. ~ Statista

Data centers often support thousands of small and large individual networks and can run several other business-critical applications. Many things, however, can go wrong within the data center, so you should know exactly what to look out for. Here are some significant data center issues and how to fix them.

What is a Data Center?

A data center is an industrial facility where people store, process, and transmit computer data.

A data center is typically a large complex of servers and associated devices, as well as the physical building or buildings that house them. Data centers are usually integrated with other services, such as telecommunications and cloud computing.

Unlike general-purpose facilities such as warehouses and office buildings, data centers are generally dedicated to one user. The major types of data centers are:

- Private enterprise data centers, which corporations or other private organizations own;

- Public enterprise data centers, which government agencies own;

- Community enterprise data centers (CEDCs), which groups of individuals own;

- Hybrid enterprise data centers (HEDCs), which combine private and public ownership.

Challenges of Data Center Management

The data center is one of the most critical components of an organization’s infrastructure. With the growing demand for cloud services and business agility, the data center has become one of the most complex systems in any enterprise.

The increasing complexity is a result of numerous factors, such as:

1. Maintaining Availability and Uptime

The primary focus of any IT organization is to ensure that its services are available at all times. This means it needs a disaster recovery plan in place in case part of the system fails.
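To make an availability goal concrete, it can help to translate an uptime percentage into the downtime it allows per year. The sketch below is only an illustration: the function name `allowed_downtime_hours` and the sample targets are assumptions chosen for this example, not figures from any particular service agreement.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours, ignoring leap years


def allowed_downtime_hours(availability_percent: float) -> float:
    """Return the yearly downtime budget implied by an availability target."""
    if not 0 <= availability_percent <= 100:
        raise ValueError("availability must be between 0 and 100")
    return HOURS_PER_YEAR * (1 - availability_percent / 100)


# Hypothetical targets, used only to show how quickly the budget shrinks.
for target in (99.0, 99.9, 99.99):
    hours = allowed_downtime_hours(target)
    print(f"{target}% uptime allows about {hours:.2f} hours of downtime per year")
```

Each extra "nine" cuts the downtime budget by a factor of ten, which is why higher availability targets usually call for more thorough recovery planning.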

Technology Advancement

Managing data centers has become more complex due to technological advancements. Various new technologies have been introduced into the market that require efficient management for their practical use. State-of-the-art systems require proper maintenance and management to deliver the desired results. This can be difficult if the required expertise is unavailable within an organization.

2. Energy Efficiency

The cost of powering an entire building can be very high. Therefore, it makes sense for an organization to invest in new technology and equipment that reduces power consumption while still performing at an acceptable level.

3. Government Restrictions

Data centers are becoming critical for businesses, but regulations restrict how they can operate in certain countries. For example, some countries limit where data may be stored, such as by requiring that certain data stay within their borders. This makes it difficult for businesses to operate in those countries, because they often have no real options other than relocating their servers or hiring local staff who can handle their cybersecurity.

4. Managing Power Utilization

Data centers require a lot of power to run their operations smoothly and efficiently. If power is not managed properly, energy is wasted and costs increase significantly over time. To avoid this, organizations should invest in energy-efficient equipment such as rack-mounted uninterruptible power supply (UPS) systems.

Organizations should also install monitoring software that alerts them when something goes wrong, so they can react quickly and prevent damage caused by power failures or electrical overloads.
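As a rough illustration of the alerting idea, the sketch below flags racks whose power draw is approaching their rated capacity. Everything in it is an assumption made for the example: the `PowerReading` structure, the `find_overloaded` helper, the 80% warning threshold, and the sample readings. Real monitoring software would pull these values from power distribution units or a DCIM platform.

```python
from dataclasses import dataclass


@dataclass
class PowerReading:
    rack_id: str
    load_watts: float      # current measured draw
    capacity_watts: float  # rated capacity of the circuit or PDU


def find_overloaded(readings, warn_ratio=0.8):
    """Return (rack_id, utilization) pairs at or above warn_ratio of capacity."""
    alerts = []
    for reading in readings:
        if reading.capacity_watts <= 0:
            raise ValueError(f"invalid capacity for {reading.rack_id}")
        utilization = reading.load_watts / reading.capacity_watts
        if utilization >= warn_ratio:
            alerts.append((reading.rack_id, utilization))
    return alerts


# Hypothetical sample data; the threshold is an assumed default.
readings = [
    PowerReading("rack-a", load_watts=3200, capacity_watts=5000),
    PowerReading("rack-b", load_watts=4600, capacity_watts=5000),
]

for rack_id, utilization in find_overloaded(readings):
    print(f"ALERT: {rack_id} is at {utilization:.0%} of rated capacity")
```

Warning at a fraction of capacity rather than at the limit leaves time to rebalance load before a breaker trips.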

5. Recovery From Disaster

Data centers have seen an increase in disasters caused by hurricanes and earthquakes, as well as man-made disasters like power outages or fires. These events can damage or even destroy equipment and systems that take weeks, possibly months, to repair or replace. This can lead to losses in productivity and revenue if critical servers or storage devices are affected.

Tips to Overcome Challenges in Data Center Management

Taking the time to ensure the building is safe, your personnel are knowledgeable about cyber security prevention, and you satisfy compliance standards goes a long way in protecting your assets from bad actors. ~Shayne Sherman, CEO of TechLoris.

Here are some tips to help you overcome common challenges in managing your data center:

1. Audit Your Security Posture Regularly

The first step in overcoming data center management challenges is regularly auditing your security posture. This will give you an idea of where you stand and allow you to identify your vulnerabilities before they become threats. You can do this by using a third-party assessment service or by hiring a qualified person to assess your current situation and provide recommendations.

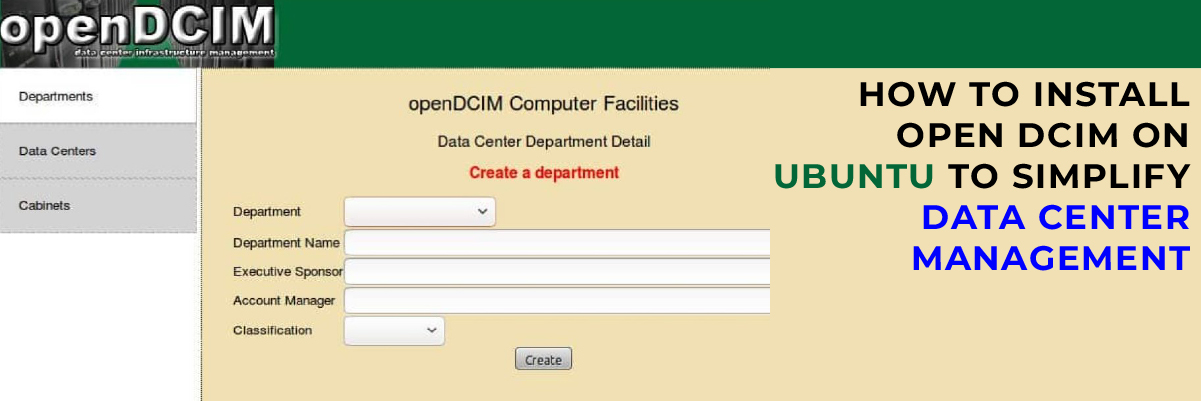

2. Use a DCIM System to Manage Uptime

A DCIM (Data Center Infrastructure Management) system gives you visibility into the health of your equipment, which helps you identify issues before they become problems. This allows you to address them proactively, before they impact operations or cause downtime.

3. Scheduled Equipment Upgrades

Upgrading equipment on a planned schedule keeps downtime to a minimum and ensures that any unforeseen issues are resolved before they significantly affect operations.

4. Implement Data Center Physical Security Measures

Physical security measures allow you to control who has access to your facility and what they can do once inside. They also help to limit unauthorized access by preventing intruders from entering through unsecured doors or windows.

5. Use the Right Tools to Secure Your Data and Network

Data security depends on a secure network. This means using the right tools and resources to protect your network from cyber threats. For example, you can install a firewall to block attacks or malware from entering your system.
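At its simplest, a firewall rule decides whether traffic from a given source is allowed through. The sketch below shows that idea using Python's standard `ipaddress` module. It is not a real firewall: the `ALLOWED_NETWORKS` list, the `is_allowed` function, and the sample addresses are assumptions made for illustration only.

```python
import ipaddress

# Hypothetical allowlist of trusted networks; a real deployment would load
# its rules from the firewall's own configuration.
ALLOWED_NETWORKS = [
    ipaddress.ip_network("10.0.0.0/8"),
    ipaddress.ip_network("192.168.1.0/24"),
]


def is_allowed(source_ip: str) -> bool:
    """Return True if source_ip falls inside any allowlisted network."""
    address = ipaddress.ip_address(source_ip)
    return any(address in network for network in ALLOWED_NETWORKS)


# Sample addresses chosen for the example (203.0.113.0/24 is reserved for documentation).
for ip in ("10.1.2.3", "203.0.113.7"):
    verdict = "allow" if is_allowed(ip) else "block"
    print(f"{ip}: {verdict}")
```

Blocking everything that is not explicitly allowed is generally a safer default than trying to list every known bad source.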

Final Words

Data centers are far from being stationary. New challenges are emerging while old ones are still evolving due to technological innovation and changes to data center infrastructures. Spending on data center management solutions is increasing as organizations face these difficulties in addition to managing power, data storage, and load balancing.

Protected Harbor offers best-in-class data center management with a unique approach. You can expect expert support with 24/7 monitoring and advanced features to keep your critical IT systems running smoothly. Our data center management software enables us to deliver proactive monitoring, maintenance, and support for your mission-critical systems.

We focus on power reliability, Internet redundancy, and physical security to keep your data safe. Our staff is trained to manage your data center as if it were our own, providing reliable service and support.

Our data center management solutions are tailored to your business needs, providing a secure, compliant, reliable foundation for your infrastructure. Contact us today to resolve your data center issues and switch to an unmatched data service solution.